As artificial intelligence continues to flourish worldwide, building green, open, and sustainable infrastructure while improving energy efficiency has become a vital component of the UN's Sustainable Development Goal (SDG) of “Industry, Innovation and Infrastructure.” In the Asia-Pacific region, integrating fragmented computing resources and delivering high-efficiency, low-cost AI services for broader accessibility has become an urgent technological challenge.

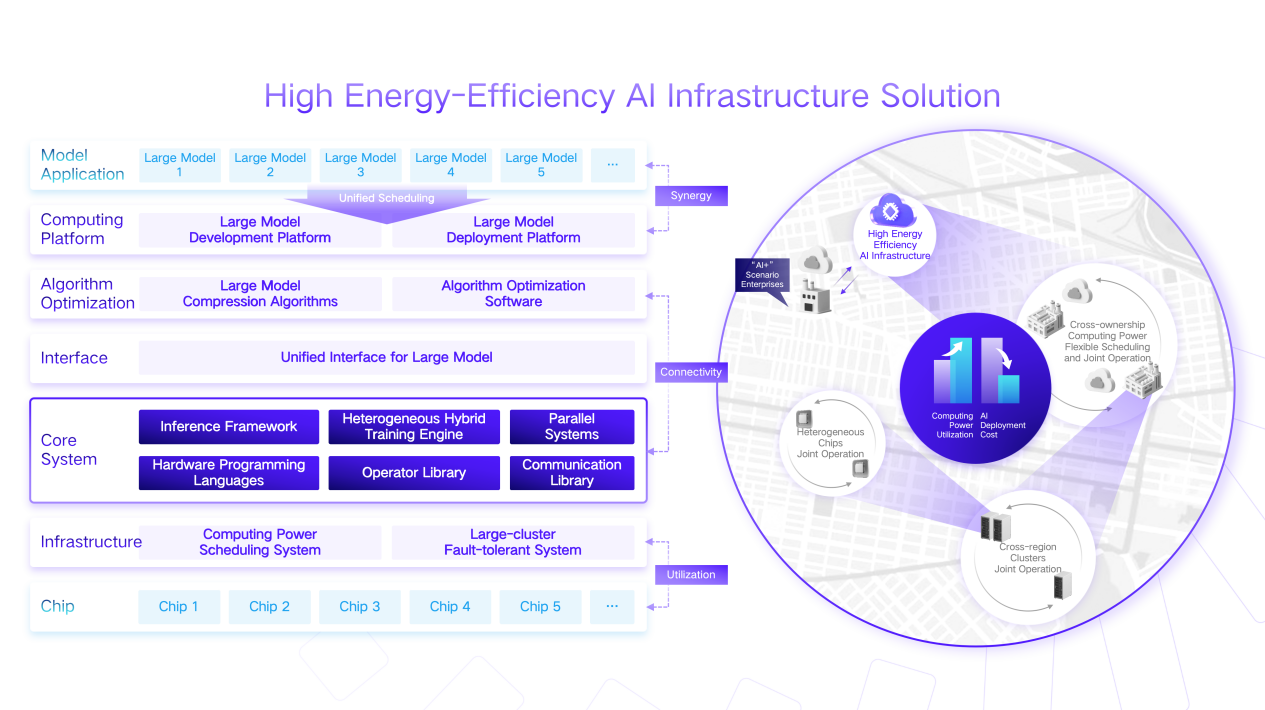

Professor Wang Yu’s team from the Department of Electronic Engineering of Tsinghua University will present their answer: a high-efficiency AI infrastructure solution. By integrating distributed and heterogeneous computing hardware and applying multi-level hardware–software co-optimization (algorithm design, model compression, operator optimization, and architecture design), the solution significantly improves overall compute utilization and infrastructure resilience. It effectively addresses the energy-efficiency challenges of AI computing and offers an innovative technological pathway toward sustainable infrastructure.

Computing Resource Integration: Enabling Cross-Domain Collaboration with “Esperanto”

AI computing encompasses highly diverse application scenarios—from real-time inference to large-scale training, extending to service robotics and intelligent vehicle networks. Hardware solutions are equally heterogeneous: general-purpose CPUs, massively parallel GPUs, programmable and energy-efficient FPGAs, and ASICs customized for specific algorithms. These devices vary significantly in type, performance, and deployment location.

To bridge the gap between rapidly growing AI computing demand and hardware performance improvements, Professor Wang Yu’s team proposed an integrated framework capable of orchestrating heterogeneous hardware across regions. The system supports automatic routing from applications to hardware, mapping N types of application frameworks onto M types of hardware platforms and dynamically routing tasks to the most suitable hardware based on scenario-specific requirements. It also integrates diverse computing resources across regions, fully supporting AI application scenarios.

In 2024, through their “HETHUB” project, a heterogeneous distributed hybrid training system for LLMs, the research team achieved a breakthrough in cross-chip hybrid training across six different chip brands. By jointly training models on four chip brands (Huawei Ascend, Iluvatar CoreX, Metax, and Moore Threads) alongside chips from AMD and NVIDIA, the system achieved 97.6% of the compute utilization of homogeneous training and supported large models with up to 70 billion parameters.

This achievement has been described as creating “Esperanto” among heterogeneous chips—like a translator fluent in multiple languages—enabling chips with different architectures to understand one another’s outputs and requirements. During training, the system can also predict the speed and performance of each chip on specific tasks, allocating tasks intelligently and improving overall utilization.
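The allocation idea can be sketched in a few lines of Python. This is only an illustration, not the HETHUB implementation: the function name `split_batch`, the chip labels, and the throughput figures are hypothetical, and the sketch assumes a predicted samples-per-second figure is already available for each chip. It divides a global batch in proportion to those predictions, so that faster and slower chips finish a training step at roughly the same time.

```python
def split_batch(total_samples: int, predicted_throughput: dict[str, float]) -> dict[str, int]:
    """Split a global batch across chips in proportion to predicted throughput.

    predicted_throughput maps a (hypothetical) chip label to its predicted
    samples per second on the current task.
    """
    if total_samples < 0:
        raise ValueError("total_samples must be non-negative")
    total_speed = sum(predicted_throughput.values())
    if total_speed <= 0:
        raise ValueError("at least one chip needs a positive predicted throughput")

    # Ideal (fractional) share for each chip.
    exact = {
        chip: total_samples * speed / total_speed
        for chip, speed in predicted_throughput.items()
    }
    # Round down first, then hand the leftover samples to the chips
    # with the largest fractional remainders.
    shares = {chip: int(value) for chip, value in exact.items()}
    leftover = total_samples - sum(shares.values())
    by_remainder = sorted(exact, key=lambda chip: exact[chip] - shares[chip], reverse=True)
    for chip in by_remainder[:leftover]:
        shares[chip] += 1
    return shares


if __name__ == "__main__":
    # Hypothetical numbers: chip_a is predicted to be three times faster than chip_b.
    print(split_batch(100, {"chip_a": 3.0, "chip_b": 1.0}))
```

A real system would also have to account for memory limits, communication cost, and the parallelism strategy in use; the sketch only covers the proportional split.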

On this basis, the team developed new parallel orchestration mechanisms that enabled joint training across data centers separated by more than 2,000 kilometers, even with communication bandwidth below 1 GB/s. This allows small and medium-sized enterprises to combine their limited edge computing resources with abundant cloud computing power, keeping high-value data assets local while unlocking broader AI potential.

Hardware–Software Co-Optimization: Maximizing the Efficiency of AI Computing

The Asia-Pacific region is rich in clean energy resources, with vast offshore wind and hydropower potential, and serves as a hub for global submarine cable networks. Rapid economic growth has driven an explosion of AI applications, which has sharpened two constraints at once: limited electricity supply and a shortage of intelligent computing centers.

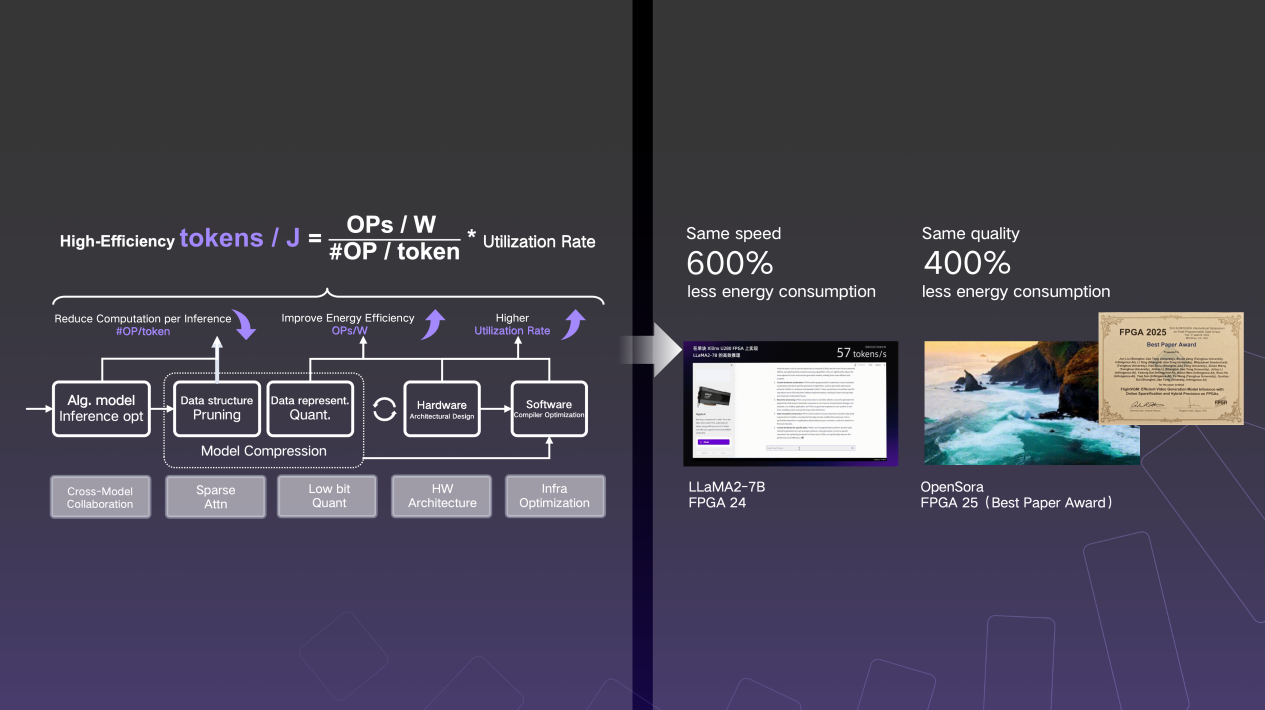

How can AI computation consume less energy without compromising performance? The team’s solution lies in refined hardware–software co-optimization. By integrating algorithm design, model compression, operator optimization, and architectural innovations, they enhance energy efficiency at every node, enabling more sustainable computing.

In practical deployments, through joint hardware–software design for sparse computing, the team achieved a 13× increase in computational efficiency and a 6× reduction in energy consumption for text-to-text applications, and a 27× increase in efficiency with a 4× reduction in energy consumption for text-to-image tasks, all without sacrificing model performance.

Their work was accepted at the 2024 and 2025 editions of the FPGA Conference, the premier international conference in reconfigurable computing. In 2025, their video generation large-model inference IP, "FlightVGM," achieved the first efficient inference of a video generation model on reconfigurable logic integrated circuits. With an entirely China-based research team, the work won the Best Paper Award at the FPGA Conference.

According to China Science Daily, this marked the first time the FPGA Conference awarded its Best Paper to a research project fully led by a mainland Chinese team, and the first time a single-country team from the Asia-Pacific region received this honor.

Rather than simply maximizing “computations per joule,” the high-efficiency AI system optimizes “effective tokens processed per joule” (Tokens/J), a more meaningful metric for greener and more sustainable AI infrastructure.
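As a rough illustration of the metric, the sketch below computes Tokens/J from a token count, an average power draw, and a run time, using the relation energy (J) = power (W) × time (s). The function name and the sample numbers are assumptions made for this example, not measurements from the team's system.

```python
def tokens_per_joule(effective_tokens: int, avg_power_watts: float, seconds: float) -> float:
    """Return effective tokens processed per joule of energy consumed."""
    energy_joules = avg_power_watts * seconds  # 1 W sustained for 1 s is 1 J
    if energy_joules <= 0:
        raise ValueError("power and time must both be positive")
    return effective_tokens / energy_joules


if __name__ == "__main__":
    # Hypothetical run: 12,000 useful tokens in 20 s at an average draw of 300 W.
    print(tokens_per_joule(12_000, 300.0, 20.0))
```

What counts as an “effective” token is left to the caller here; one reasonable reading is that only tokens delivered to the user count, so that wasted or repeated computation lowers the score even when raw operations per joule look good.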

Cost Reduction and Inclusivity: Making AI Affordable for Underdeveloped Regions

The cost of AI services has long been a barrier to technological inclusivity. In many developing regions, expensive computing resources prevent local enterprises and institutions from adopting AI. The research team, together with its industrial spin-off company Infinigence AI, aims to reduce AI costs by three to four orders of magnitude. This is not merely price cutting; it represents systemic optimization of infrastructure to enhance efficiency and stability, from energy conversion to intelligent service delivery.

The infrastructure system developed by the Infinigence AI team has already been validated in multiple regions across China, including deployments at the Shanghai Xuhui Foundation Model Innovation Center Computing Power Ecosystem Platform, the Beijing Haidian Public Computing Power Service Platform, the Zhejiang Hangzhou Computing Power Resource Service Platform, and the Shanghai Jing’an AI+ Audiovisual Public Service Platform. These projects have established a replicable infrastructure service model, with service capabilities covering nearly a thousand research institutions and AI enterprises.

These platforms share a common characteristic: transforming computing services from simple resource transactions into full industrial ecosystem engines. SMEs no longer need to purchase expensive hardware; instead, they access AI services on demand. Tasks with different priorities are assigned to computing resources of varying cost levels, and training and inference workloads are flexibly scheduled according to time sensitivity. When the cost of interacting with AI drops from several yuan per interaction to nearly negligible levels, many previously “impossible” tasks become feasible—illustrating the inclusive value created by cost reduction.

For economically underdeveloped regions, this model offers a replicable pathway. Instead of investing heavily in massive data centers, regions can build distributed infrastructure that connects local energy and resource hubs, gradually developing digital service systems tailored to local needs.

From Tsinghua to the World: The Inclusive Power of Tech

From a lab project to industrial solutions, this achievement has moved beyond campus and into diverse real-world applications. It represents not only technological innovation but also a development philosophy: combining advanced technology with high-quality industrial models to deliver state-of-the-art AI services at affordable costs to those who need them most. The solution will demonstrate how technology can carry warmth and contribute to achieving the SDG vision of sustainable “Industry, Innovation and Infrastructure.” As high-efficiency AI systems continue to evolve and proliferate, we anticipate that AI infrastructure development across the Asia-Pacific region will move toward a more balanced, greener, and more inclusive digital future.

In recent years, the Department of Electronic Engineering at Tsinghua University has maintained in-depth exchanges with UN agencies to explore how cutting-edge technologies can empower the Sustainable Development Goals (SDGs). In October 2024, representatives from five international organizations—including the International Telecommunication Union (ITU) and the World Health Organization (WHO)—visited the department, where faculty and students presented innovations in AI and big data platforms applied to urban planning, smart transportation, and personalized healthcare. In October 2025, Ms. Armida Salsiah Alisjahbana, Executive Secretary of the United Nations Economic and Social Commission for Asia and the Pacific (UNESCAP), visited Tsinghua’s Department of Electronic Engineering and observed demonstrations of humanoid robots, bionic faces, and urban simulators. The visit offered insight into how the department translates real-world needs into tangible AI-powered solutions for social good.

Tsinghua University has been invited to participate in the 13th Asia-Pacific Forum on Sustainable Development (APFSD), to be held in Bangkok, Thailand, from February 24 to 27, 2026, where it will further share its SDG-driven technological solutions on an international stage.